Google Cloud Next 2024: Everything announced so far | TechCrunch

Google’s Cloud Next 2024 event takes place in Las Vegas through Thursday, and that means lots of new cloud-focused news on everything from Gemini, Google’s AI-powered chatbot, to AI, devops and security. Last year’s event was the first in-person Cloud Next since 2019, and Google took to the stage to show off its ongoing dedication to AI with its Duet AI for Gmail and many other debuts, including the expansion of generative AI to its security product line and other enterprise-focused updates.

Don’t have time to watch the full archive of Google’s keynote event? That’s OK; we’ve summed up the most important parts of the event below, with additional details from the TechCrunch team on the ground at the event. And Tuesday’s updates weren’t the only things Google made available to non-attendees — Wednesday’s developer-focused stream started at 10:30 a.m. PT.

Google Vids

Leveraging AI to help customers develop creative content is something Big Tech is looking for, and Tuesday, Google introduced its version. Google Vids, a new AI-fueled video creation tool, is the latest feature added to the Google Workspace.

Here’s how it works: Google claims users can make videos alongside other Workspace tools like Docs and Sheets. The editing, writing and production are all there. You can also collaborate with colleagues in real time within Google Vids.

Gemini Code Assist

After reading about Google’s new Gemini Code Assist, an enterprise-focused AI code completion and assistance tool, you may be asking yourself if that sounds familiar. And you would be correct. TechCrunch Senior Editor Frederic Lardinois writes that “Google previously offered a similar service under the now-defunct Duet AI branding.” Then Gemini came along. Code Assist is a direct competitor to GitHub’s Copilot Enterprise.

And to put Gemini Code Assist into context, Alex Wilhelm breaks down its competition with Copilot, and its potential risks and benefits to developers, in the latest TechCrunch Minute episode.

Google Workspace

Among the new features are voice prompts to kick off the AI-based “Help me write” feature in Gmail while on the go. Another Gmail feature instantly turns rough email drafts into more polished emails. Over on Sheets, you can send out a customizable alert when a certain field changes. Meanwhile, a new set of templates makes starting a new spreadsheet easier. For the Docs lovers, there is now support for tabs. This is good because, according to the company, you can “organize information in a single document instead of linking to multiple documents or searching through Drive.” Of course, subscribers get the goodies first.

Google also seems to have plans to monetize two of its new AI features for the Google Workspace productivity suite. These will take the form of $10/month/user add-on packages. One is the new AI meetings and messaging add-on, which takes notes for you, provides meeting summaries and translates content into 69 languages. The other is the newly introduced AI security package, which helps admins keep Google Workspace content more secure.

Imagen 2

In February, Google announced an image generator built into Gemini, Google’s AI-powered chatbot. The company pulled it shortly after it was found to be randomly injecting gender and racial diversity into prompts about people. This resulted in some offensive inaccuracies. While we waited for an eventual re-release, Google came out with the enhanced image-generating tool, Imagen 2. This is inside its Vertex AI developer platform and has more of a focus on enterprise. Imagen 2 is now generally available and comes with some fun new capabilities, including inpainting and outpainting. There’s also what Google’s calling “text-to-live images” where you can now create short, four-second videos from text prompts, along the lines of AI-powered clip generation tools like Runway, Pika and Irreverent Labs.

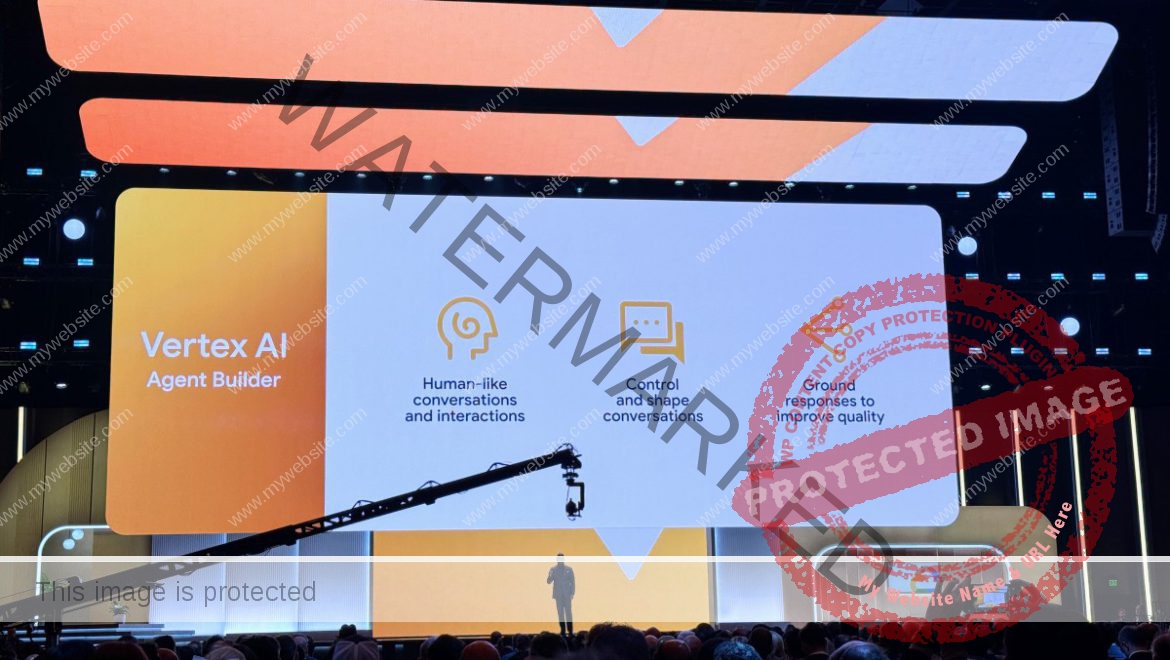

Vertex AI Agent Builder

We can all use a little bit of help, right? Meet Google’s Vertex AI Agent Builder, a new tool to help companies build AI agents.

“Vertex AI Agent Builder allows people to very easily and quickly build conversational agents,” Google Cloud CEO Thomas Kurian said. “You can build and deploy production-ready, generative AI-powered conversational agents and instruct and guide them the same way that you do humans to improve the quality and correctness of answers from models.”

To do this, the company uses a process called “grounding,” where the answers are tied to something considered to be a reliable source. In this case, it’s relying on Google Search (which in reality may or may not be accurate).

Gemini comes to databases

Google calls Gemini in Databases a collection of features that “simplify all aspects of the database journey.” In less jargony language, it’s a bundle of AI-powered, developer-focused tools for Google Cloud customers who are creating, monitoring and migrating app databases.

Google renews its focus on data sovereignty

Google has offered sovereign cloud options before, but now it is focused more on partnerships than on building them out on its own.

Security tools get some AI love

Google is joining the rush to productize generative AI-powered security tools with a number of new products and features aimed at large companies. Those include Threat Intelligence, which can analyze large portions of potentially malicious code. It also lets users perform natural language searches for ongoing threats or indicators of compromise. Another is Chronicle, Google’s cybersecurity telemetry offering for cloud customers to assist with cybersecurity investigations. The third is the enterprise cybersecurity and risk management suite Security Command Center.

Nvidia’s Blackwell platform

One of the anticipated announcements is Nvidia’s next-generation Blackwell platform coming to Google Cloud in early 2025. Yes, that seems so far away. However, here is what to look forward to: support for the high-performance Nvidia HGX B200 for AI and HPC workloads and GB200 NVL72 for large language model (LLM) training. Oh, and we can reveal that the GB200 servers will be liquid-cooled.

Chrome Enterprise Premium

Meanwhile, Google is expanding its Chrome Enterprise product suite with the launch of Chrome Enterprise Premium. What’s new here mostly pertains to the security capabilities of the existing service, based on the insight that browsers are now the endpoints where most of the high-value work inside a company is done.

Gemini 1.5 Pro

Everyone can use a “half” every now and again, and Google obliges with Gemini 1.5 Pro. This, Kyle Wiggers writes, is “Google’s most capable generative AI model,” and is now available in public preview on Vertex AI, Google’s enterprise-focused AI development platform. Here’s what you get for that half: an increase in the amount of context it can process, from 128,000 tokens up to 1 million tokens, where “tokens” refers to subdivided bits of raw data (like the syllables “fan,” “tas” and “tic” in the word “fantastic”).

Open source tools

At Google Cloud Next 2024, the company debuted a number of open source tools primarily aimed at supporting generative AI projects and infrastructure. One is MaxDiffusion, a collection of reference implementations of various diffusion models that run on XLA, or Accelerated Linear Algebra, devices. Then there is JetStream, a new engine to run generative AI models. The third is MaxText, a collection of text-generating AI models targeting TPUs and Nvidia GPUs in the cloud.

Axion

We don’t know a lot about this one, but here is what we do know: Google Cloud joins AWS and Azure in announcing its first custom-built Arm processor, dubbed Axion. Frederic Lardinois writes that “based on Arm’s Neoverse 2 designs, Google says its Axion instances offer 30% better performance than other Arm-based instances from competitors like AWS and Microsoft and up to 50% better performance and 60% better energy efficiency than comparable X86-based instances.”

The entire Google Cloud Next keynote

If all of that isn’t enough of an AI and cloud update deluge, you can watch the entire event keynote via the embed below.

Google Cloud Next’s developer keynote

On Wednesday, Google held a separate keynote for developers. It offered a deeper dive into a number of tools outlined during the Tuesday keynote, including Gemini Cloud Assist and the use of AI for product recommendations and chat agents, and ended with a showcase from Hugging Face. You can check out the full keynote below.